From AI Studio to AI Forge

Essay • March 6, 2026

My process is moving from collaborating with models inside a room toward building the operating machinery behind the room.

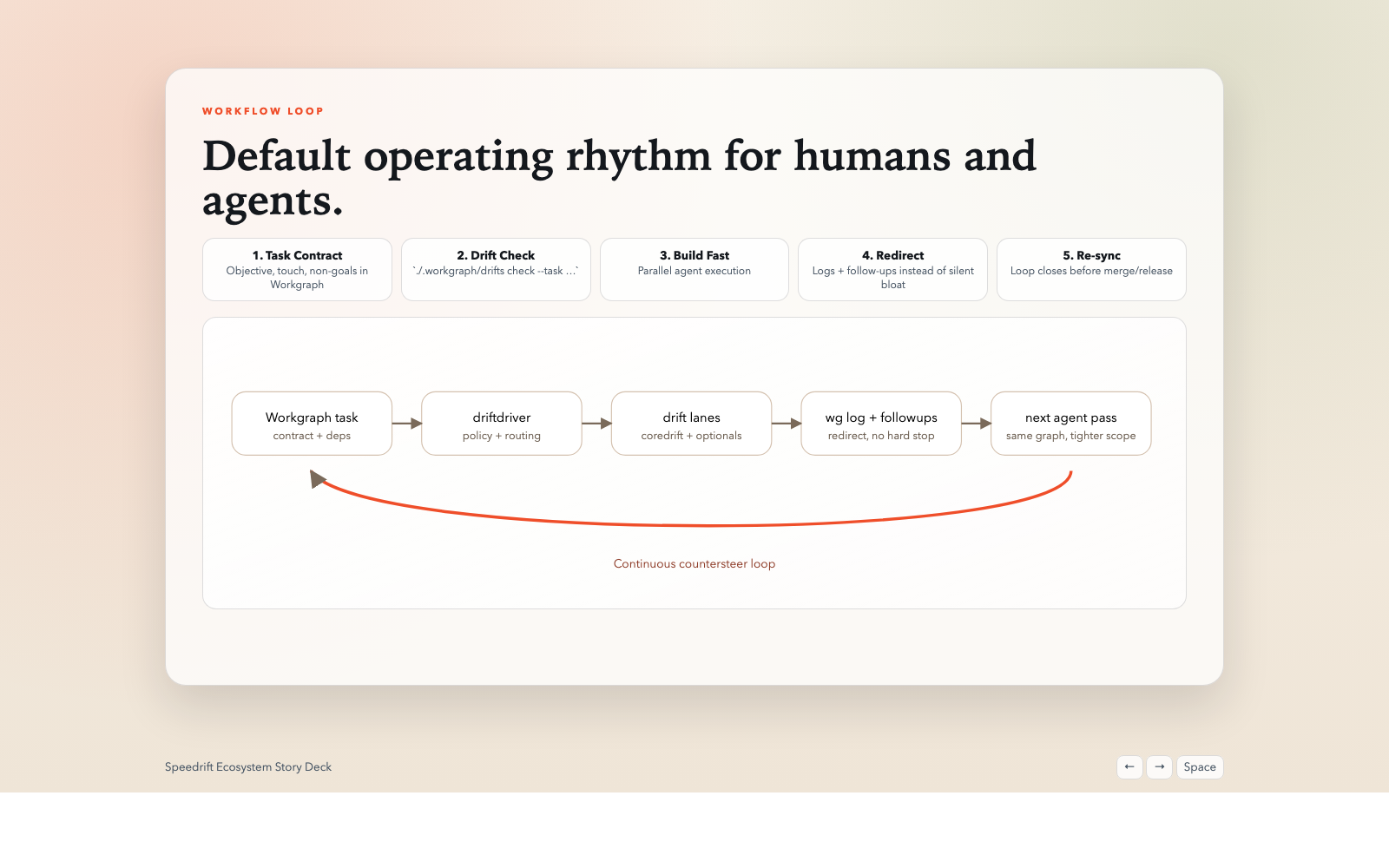

Still figuring out the right visual language for this, but this gets close.

A while back I wrote about the idea of an AI studio. That was the right metaphor for that moment. It captured something important: I was no longer asking a chatbot for outputs; I was staging a room, directing work, managing loops, and using models more like specialists than vending machines.

But over the last few months I’ve realized the studio metaphor is starting to fall short.

The question I’m working on now isn’t just, “How do I collaborate with models well?” It’s more like: what would it look like if the room itself started to operate? Not in some magical fully autonomous sense. I don’t mean “push a button and the company runs itself.” I mean something much more bounded and much more practical: repos, workflows, checks, handoffs, and follow-up loops that keep moving, correcting, and recording with much less direct intervention from me.

That’s the shift.

I think I’m moving from AI studio to AI forge.

I should note: “AI Forge” is not a finished product name or some grand theory I’m claiming to have completed. It’s more like the least-wrong phrase I have right now for the direction my thinking is going. And, as usual with this stuff, I reserve the right to rename it again in six weeks.

If I’m honest, I think what I’m really driving toward is my own bounded dark factory. There, I said it. That phrase still sounds a little dramatic to me, but it is increasingly the direction I see in the work.

The posts were checkpoints, not contradictions

If I look back over the last year or so, I can see a pretty clear progression in how I’ve been trying to name what I was doing.

- “Vibe coding” was really about discovering that I could build at all.

- The early CLI journey and agentic model posts were about context control and process discipline.

- The workflow and business acceleration post was about tying the models into actual operating systems.

- The AI studio post was about the collaborative mental model.

- The intuition post was about how to smell when the models are going wrong.

All of those were right. None of them were enough.

What they were mostly describing, though, was my relationship to the models inside a session or a project. What they weren’t fully naming was the next layer up: the operating fabric that sits above the individual session and keeps the whole thing from dissolving into chaos.

So what’s the mental model gap now?

I think most people still think in one of three units:

- The prompt

- The agent

- The collaborator

Those all matter. But the unit that increasingly matters to me is the loop.

Not the one-shot output. Not even the single agent. The loop: observe, decide, execute, verify, record, learn.

Once you start thinking that way, a lot of the conversation changes.
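
To make the loop concrete, here is a minimal Python sketch of one pass through observe, decide, execute, verify, record, learn. Everything in it is a hypothetical stand-in: the `Loop` class, the queue items, and the rule that anything starting with "bad" fails are assumptions for illustration, and `decide` is a placeholder where model judgment would sit.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Loop:
    """One operating loop over a single hypothetical work queue."""
    queue: list
    ledger: list = field(default_factory=list)    # the trail the loop leaves
    lessons: list = field(default_factory=list)   # items known to fail

    def observe(self) -> Optional[str]:
        # Look at the world: here, just the next queued item.
        return self.queue[0] if self.queue else None

    def decide(self, item: str) -> str:
        # Placeholder for model judgment: skip anything that failed before.
        return "skip" if item in self.lessons else "run"

    def execute(self, item: str, action: str) -> str:
        # Placeholder for a deterministic runner.
        self.queue.pop(0)
        if action == "skip":
            return "skipped"
        return "failed" if item.startswith("bad") else "done"

    def verify(self, outcome: str) -> bool:
        return outcome in ("done", "skipped")

    def record(self, item: str, action: str, outcome: str, ok: bool) -> None:
        self.ledger.append(
            {"item": item, "action": action, "outcome": outcome, "verified": ok}
        )

    def learn(self, item: str, ok: bool) -> None:
        if not ok:
            self.lessons.append(item)

    def tick(self) -> bool:
        """Run one full pass; return False when there is nothing to observe."""
        item = self.observe()
        if item is None:
            return False
        action = self.decide(item)
        outcome = self.execute(item, action)
        ok = self.verify(outcome)
        self.record(item, action, outcome, ok)
        self.learn(item, ok)
        return True


loop = Loop(queue=["fix-docs", "bad-migration", "bad-migration"])
while loop.tick():
    pass

for entry in loop.ledger:
    print(entry)
```

The second "bad-migration" is skipped because the first one failed verification and was learned from. The point of the sketch is that the ledger, not the individual output, is what the next model or human picks up.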

The real shift in my head

The biggest change is that I’m no longer primarily asking, “Can the model do this task?”

I’m asking different questions now:

- What keeps this workflow healthy?

- Where does drift show up?

- What has to be verified?

- What is safe to automate?

- What needs a human decision versus a human glance?

- How does the system leave a trail so the next model, or the next human, can pick it up without losing the thread?

That’s a different problem. It’s less about prompting and more about governance, continuity, and return on expense.

To put it more bluntly: the value isn’t “better AI answers.” The value is lower friction in the operating system. Fewer broken handoffs. Fewer forgotten decisions. Earlier detection of stalls. Smaller costs when something breaks. Better continuity across repos, workflows, and business systems.

So the shift in my head looks something like this:

- From sessions to systems

- From output quality to workflow continuity

- From collaboration to orchestration

- From “what did the model say?” to “what does the loop do?”

- From clever prompting to model-mediated governance

That last phrase, model-mediated, is important to me, and I should probably define it more carefully than I usually do.

In the architecture work I’ve been doing, model-mediated does not mean the model directly owns side effects. It means the model owns judgment, intent, routing, timing, and interpretation. Deterministic systems own execution, evidence, and audit trails. Or more simply: the model decides what something means and what should happen next; code executes through a governed work surface and leaves artifacts behind.

That distinction matters because otherwise you get one of two bad outcomes: brittle rules pretending to be intelligence, or ungoverned model behavior pretending to be autonomy. Neither is very interesting. What I’m after is truthfulness-by-construction: if the system claims something happened, there should be work items, artifacts, or execution traces behind that claim.
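
A rough sketch of that split, under stated assumptions: `model_decide` is a stub standing in for a real model call, `ALLOWED_ACTIONS` is a hypothetical governed work surface, and `ARTIFACTS` is an in-memory stand-in for an artifact store. None of these names come from the architecture docs. The idea being illustrated is only that the model returns intent, code performs the side effect, and a claim counts only if an artifact backs it.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkItem:
    """What the model asks for: intent only, no side effects."""
    action: str
    target: str


def model_decide(event: str) -> WorkItem:
    # Stub for a model call (assumption): interpret an event, choose an intent.
    if "failing" in event:
        return WorkItem(action="open_followup", target="repo-a")
    return WorkItem(action="noop", target="repo-a")


# Hypothetical governed work surface: the only actions code may execute.
ALLOWED_ACTIONS = {
    "noop": lambda target: f"nothing to do for {target}",
    "open_followup": lambda target: f"follow-up task opened for {target}",
}

ARTIFACTS = {}  # in-memory stand-in for an artifact store


def execute(item: WorkItem) -> str:
    """Run the work item deterministically and return its artifact id."""
    if item.action not in ALLOWED_ACTIONS:
        raise PermissionError(f"action not on the work surface: {item.action}")
    output = ALLOWED_ACTIONS[item.action](item.target)
    record = {"action": item.action, "target": item.target, "output": output}
    payload = json.dumps(record, sort_keys=True).encode()
    artifact_id = hashlib.sha256(payload).hexdigest()[:12]
    ARTIFACTS[artifact_id] = record
    return artifact_id


def claim_is_backed(artifact_id: str) -> bool:
    """A claim that something happened only counts if an artifact exists."""
    return artifact_id in ARTIFACTS


item = model_decide("tests failing on main")
artifact_id = execute(item)
print(item, artifact_id, claim_is_backed(artifact_id))
```

If the model asks for something outside the work surface, `execute` refuses instead of improvising, and a claim with no artifact behind it fails `claim_is_backed`.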

What I mean by AI Forge

“AI studio” described the room.

“AI Forge” is my current attempt to describe the machinery behind the room.

A forge is not just a place where an artisan thinks beautiful thoughts. It’s heat, tools, sequence, repetition, discipline, shaping, reheating, reshaping, and quality control. Things move through it. They come out altered. There is craft in it, but there is also process.

That’s closer to what I’m after now.

If I map it to the architecture docs, AI Forge is not one monolithic super-agent. It’s closer to a stack:

- a model plane where judgment and interpretation happen

- an execution plane where deterministic systems run the side effects

- a memory plane where trajectory, identity, and working context are managed

- a governance plane where approvals, policy, and autonomy tiers live

- an integration plane where tools, MCPs, APIs, and external systems get touched

The reason I care about that split is that it keeps the roles clean. Models judge. Runners execute. Memory carries continuity. Governance defines what can happen without me. Observability tells me what actually happened.
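
The governance plane is the easiest of these to sketch. The example below is an illustration, not the actual design: the tier names, the `POLICY` table, and the action names are all hypothetical. It shows one way "what can happen without me" could be expressed as data, with a ceiling the human can raise or lower.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical autonomy tiers, from least to most autonomy."""
    HUMAN_DECISION = 0   # a person must approve before anything runs
    HUMAN_GLANCE = 1     # runs, but is flagged for review afterwards
    AUTONOMOUS = 2       # runs and is only recorded


# Hypothetical policy table: the most autonomy each action is ever granted.
POLICY = {
    "restart_service": Tier.AUTONOMOUS,
    "open_followup_task": Tier.HUMAN_GLANCE,
    "deploy_to_production": Tier.HUMAN_DECISION,
}


def gate(action: str, ceiling: Tier = Tier.AUTONOMOUS) -> Tier:
    """Return how an action must be handled under the current ceiling.

    Unknown actions fall back to HUMAN_DECISION, and the system-wide
    ceiling can only reduce autonomy, never add to it.
    """
    granted = POLICY.get(action, Tier.HUMAN_DECISION)
    return min(granted, ceiling)


print(gate("restart_service"))
print(gate("restart_service", ceiling=Tier.HUMAN_GLANCE))
print(gate("drop_database"))
```

Lowering `ceiling` is the code-level version of deciding the system needs less autonomy for a while; anything not named in the policy defaults to a human decision.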

When I say AI Forge, I mean a model-mediated operating layer that can:

- keep work moving across multiple repos and workflows

- surface drift, pressure, and dependency problems early

- emit bounded follow-up work

- preserve trail and context

- let a human stay at the level of policy, judgment, and strategic redirection more often than brute-force coordination

If the studio is where I collaborate with the models, the forge is where that collaboration starts becoming operational infrastructure.

Why Speedrift matters so much to me

This is where the Speedrift ecosystem comes in, and why I keep circling back to it.

Speedrift started, in my head and in practice, much closer to a repo-local drift checker. Useful, yes, but narrower. Over time it has evolved into something much more interesting: a multi-repo, model-mediated operations system with bounded autonomy. That matters because it forces the right questions.

Not:

- Can the agent finish this task?

But:

- Which repos are healthy?

- Which ones are stalled?

- Where are the dependency bottlenecks?

- What corrective work can be emitted safely?

- What should be logged, verified, or escalated?

- How does the system improve itself without becoming opaque?

The mental model shift in the Speedrift docs is actually the mental model shift in my own head:

- repo-local lane runner -> multi-repo operating fabric

- one-off checks -> continuous cycle

- scattered logs -> narrated dashboard and ledgers

- ad hoc intervention -> bounded corrective automation

That is very close to the core of what I mean by AI Forge.
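
The multi-repo questions above (which repos are stalled, where the dependency bottlenecks are, what corrective work can be emitted safely) can be sketched in a few lines. This is not Speedrift's code or API. The `Repo` shape, the repo names, the three-day idle threshold, and the cap of two follow-ups are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Repo:
    name: str
    last_activity: datetime
    depends_on: list = field(default_factory=list)


def stalled(repos, now, max_idle=timedelta(days=3)):
    """Names of repos with no activity for longer than max_idle."""
    return {r.name for r in repos if now - r.last_activity > max_idle}


def blocked_by(repos, stalled_names):
    """Map each stalled repo to the repos that depend on it."""
    blockers = {}
    for r in repos:
        for dep in r.depends_on:
            if dep in stalled_names:
                blockers.setdefault(dep, []).append(r.name)
    return blockers


def emit_followups(repos, now, limit=2):
    """Emit at most `limit` corrective tasks, biggest bottleneck first."""
    names = stalled(repos, now)
    blockers = blocked_by(repos, names)
    ranked = sorted(names, key=lambda n: (-len(blockers.get(n, [])), n))
    return [
        f"investigate stall in {n} (blocks {len(blockers.get(n, []))} repo(s))"
        for n in ranked[:limit]
    ]


now = datetime(2026, 3, 6)
repos = [
    Repo("core", now - timedelta(days=10)),
    Repo("dashboard", now - timedelta(days=1), ["core"]),
    Repo("cli", now - timedelta(hours=2), ["core"]),
    Repo("docs", now - timedelta(days=5)),
    Repo("site", now - timedelta(days=7), ["docs"]),
]
tasks = emit_followups(repos, now)
for task in tasks:
    print(task)
```

The `limit` parameter is the "bounded" part: three repos are stalled here, but only the two that block the most downstream work get a follow-up in this cycle.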

Speedrift, to me, is not just a tool. It’s one of the first real proving grounds for the dark-factory idea. Or maybe “crucible” is the better word, given the forge metaphor.

Recent work: the Speedrift story deck

I recently pulled the ecosystem into a keynote-style story deck because I wanted a visual language for the operating rhythm, not just repo docs and READMEs. If you want the current public surface area, start with the companion slide deck listed under the related posts at the end.

So what do I mean by a “dark factory”?

Probably worth defining, since that phrase can sound either exciting or insane depending on your mood.

I should also say: I didn’t invent the dark-factory metaphor. I’m borrowing it from the manufacturing idea of a lights-out or dark factory, where an automated plant can run in the dark because it doesn’t need people on the floor all the time. I’m borrowing that metaphor for software and operational systems, but with a lot more caution and a lot more governance than the phrase can imply on first hearing.

I do not mean:

- no humans

- no oversight

- some magical AGI software company

- a black box making irreversible decisions while everybody sleeps

I mean something much more boring, which is exactly why I think it matters.

A dark factory, as I’m using the term, is a workflow environment that can keep itself operating, improving, coordinating, and recovering with low human intervention, while still staying inside clear policy and verification boundaries.

In other words:

- services restart when they should

- stalled work gets noticed

- broken dependency chains get surfaced

- corrective tasks get emitted

- verification gates still matter

- the system leaves a trail

- humans can step in at the right altitude

That last part is the real point. The human doesn’t disappear. The human changes altitude.

Instead of manually pushing every wheel, the human is setting policy, reviewing exceptions, making strategic calls, and deciding when the system has earned more autonomy or needs less of it.

That is a much more interesting model than either “AI assistant” or “fully autonomous agent.”

Where Light Forge Works fits

One reason I think this way so much now is that Light Forge Works sits right at the edge between the visible and the invisible sides of this problem.

In the Light Forge world this is already starting to surface in a concrete way. ForgeWorks Core is explicitly being framed as a model-mediated operational backbone: chat as the human interface, deterministic work items for side effects, approvals for critical actions, and artifacts as the thing that backs the record. That feels important to me because it means AI Forge isn’t just a blog metaphor. Pieces of it are already showing up in the architecture.

Light Forge Works is the outward-facing business. It’s the promise to clients: we can take a messy workflow, de-risk it, and build a single-purpose application or system that actually improves the way work moves.

AI Forge, if the term sticks, is the inward-facing layer. It’s the machinery I increasingly think needs to exist underneath that promise if a very small team is going to operate at a level that used to require many more people.

One way of putting it - maybe too neatly, but I think it’s directionally right - is this:

- Light Forge Works is the visible forge: the work clients see, the workflow transformation, the applications, the service layer.

- AI Forge is the operating substrate behind it: the loops, ledgers, checks, context systems, control planes, and bounded automation that make the visible work possible.

- The dark factory is the operating condition I’m aiming toward: not perfection, not full autonomy, but a system that keeps a surprising amount of itself moving.

That distinction matters because otherwise people hear this stuff and think it’s all just “Braydon likes AI tooling.”

No. I like operating systems. I like reducing friction. I like structure from complexity. The models happen to be the new material.

Visual: The shift I think I’m making

Diagram: Human -> AI Forge (model-mediated operating fabric) -> Light Forge Works (client delivery + workflow transformation). The Speedrift ecosystem (proving ground / control plane) feeds into AI Forge. AI Forge drives each stage of the loop: Observe -> Decide -> Execute -> Verify -> Record -> Learn -> back to Observe.

The unit is no longer just the prompt or the agent. It's the operating loop.

Why I think this matters beyond coding

I don’t actually think the long-term impact here is mainly about software development, even though that’s where a lot of the sharpest experimentation is happening.

I think the deeper shift is that business operators (people who understand workflow, economics, customer reality, constraints, and governance) are going to be able to shape software and systems much more directly than before.

Not because code stops mattering. Code absolutely matters. Verification matters. Architecture matters. But the bottleneck begins to move.

The bottleneck becomes:

- clarity of workflow

- quality of governance

- ability to detect drift

- quality of context design

- discipline around verification

- knowing what not to automate

That is a very different landscape.

If AI Forge or things like it become real, I think the impact looks something like this:

- very small high-trust teams operating with much more leverage

- more business-native software because operators can shape it directly

- a tighter connection between strategy and implementation

- faster iteration on operational systems

- better return on expense for focused, governed automation

The part I keep coming back to is that the repo, the workflow, the CRM, the notes, the queue, the deployment surface, the logs - these increasingly start to feel like one connected operating surface rather than separate tools.

That’s the future impact I can see, at least from where I’m standing.

The honest part: I am not there yet

I should note: none of this is finished. Not even close.

There are still real problems everywhere:

- model drift is real

- context rot is real

- verification burden is still high

- UI/UX work is still painful

- broad autonomy still breaks in stupid ways

- model confidence is still a terrible proxy for correctness

And the more ambitious the loop, the more dangerous the failure mode if you don’t have strong governance.

That is why I keep returning to the boring stuff:

- test gates

- hooks

- ledgers

- explicit policies

- bounded actions

- rollback paths

- clear trail and handoff context

Without those, this is a toy. Or worse, a chaos amplifier.

So I am not arguing for surrendering judgment to models. I am arguing for designing better systems in which models can do more useful work without putting the whole operation at risk.

So what’s the takeaway?

I think the studio metaphor got me to the right place for a while. It taught me to stop treating models like vending machines and start treating them like collaborators inside a designed room.

But now I’m trying to think one layer above that.

I’m trying to understand what it means to build the forge behind the studio.

That’s where Speedrift feels important to me. That’s where the dark-factory language starts to feel useful. That’s where Light Forge Works and this emerging idea of AI Forge begin to connect.

If the next year goes the way I think it might, the real change won’t be that I got better at prompting or built a few more apps.

It will be that the operating loops themselves became more coherent, more visible, more bounded, and more capable of keeping work moving.

That, to me, is the real prize.

Whether “AI Forge” turns out to be the right name for it, I don’t know yet. But it is the name that currently helps me see the shape of the thing.

And right now, that feels useful.

Process note

This draft was written with Codex helping me structure and tighten the argument while I pushed on the framing and the distinctions. It used my dossier as part of that process, which was itself derived from my writing style and my meeting transcripts, so the goal was not just to get the ideas down but to get them down in a voice that actually sounds like me. The hero image was generated with grok-aurora-cli because I wanted a visual that sat somewhere between workshop, control room, and operating metaphor.

Related posts / references

- Companion slide deck

- My 'Vibe Coding' Process ATM

- The CLI LLM Agent Journey So Far

- My agentic model at the end of June 2025

- How I'm integrating AI into my workflow for business acceleration

- The Mindset Gap: How an AI Studio Changed My Workflow

- Developing intuition for CLI-based AI coding

- Speedrift Ecosystem